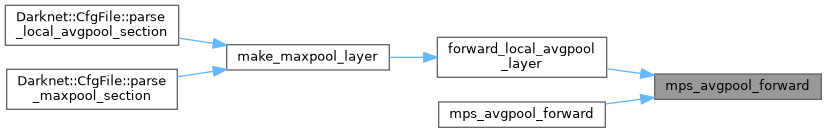

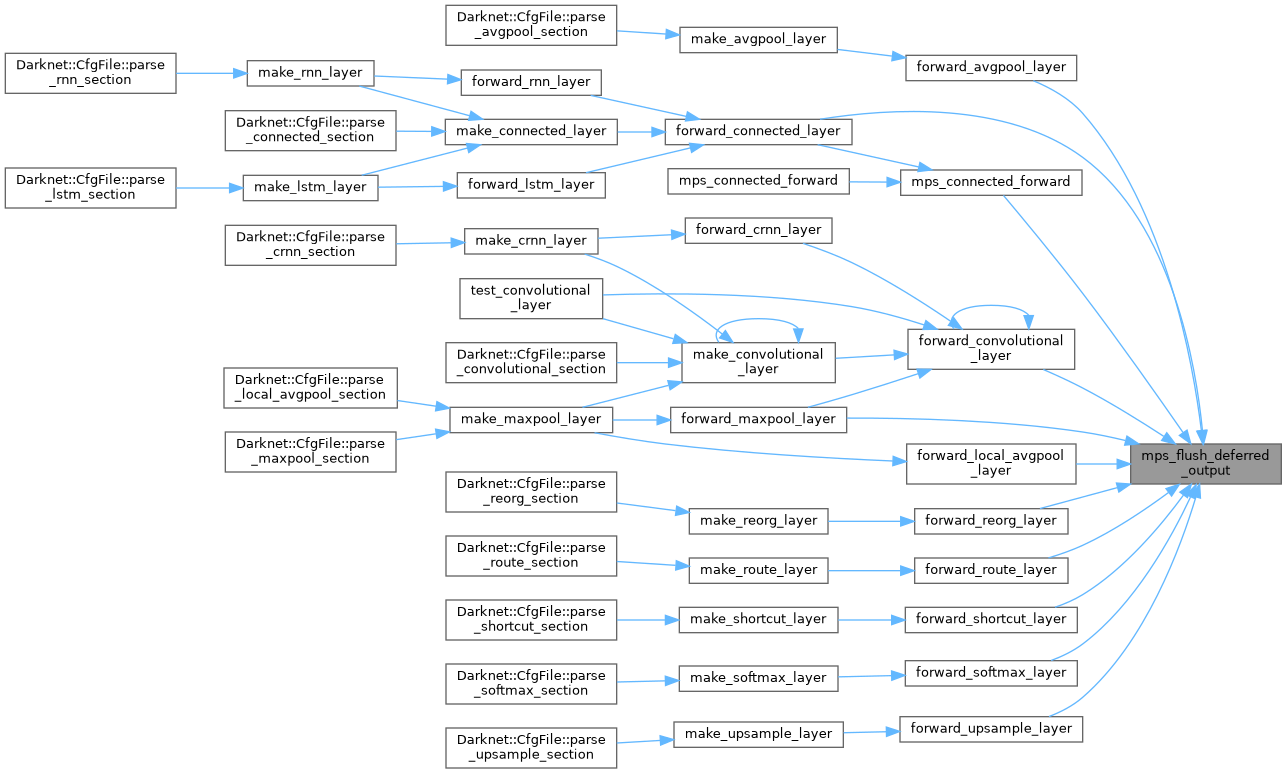

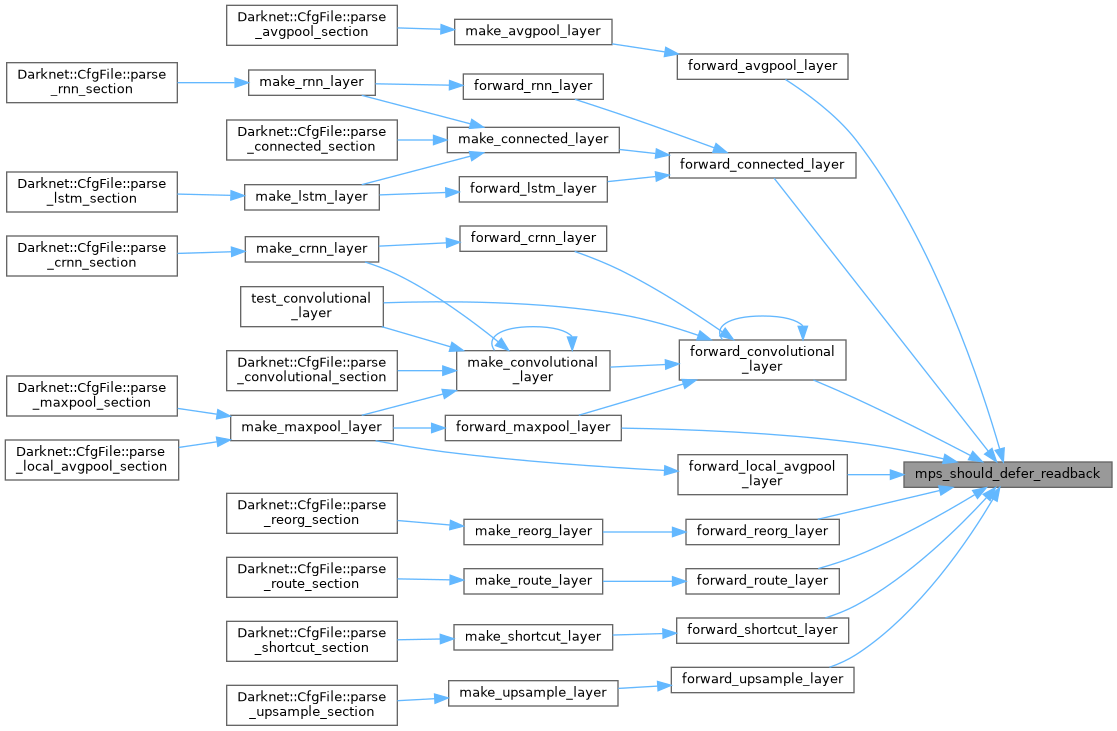

| bool | mps_avgpool_forward (const Darknet::Layer &l, const Darknet::Layer *prev, const float *input, float *output, bool defer_readback, const char **reason) |

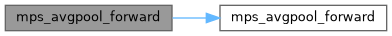

| static bool | mps_avgpool_forward (const Darknet::Layer &l, const float *input, float *output) |

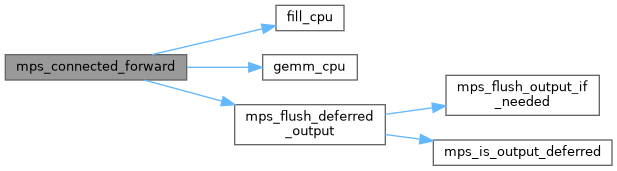

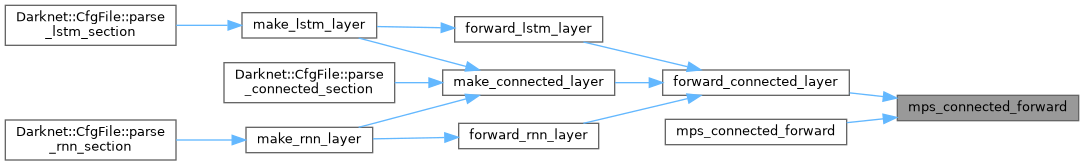

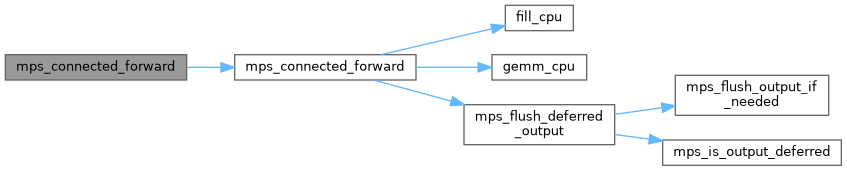

| bool | mps_connected_forward (const Darknet::Layer &l, const Darknet::Layer *prev, const float *input, float *output, bool defer_readback, bool *activation_applied, const char **reason) |

| | Try to execute connected layer forward using MPS (batchnorm/activation). |

| static bool | mps_connected_forward (const Darknet::Layer &l, const Darknet::Layer *prev, const float *input, float *output, const char **reason) |

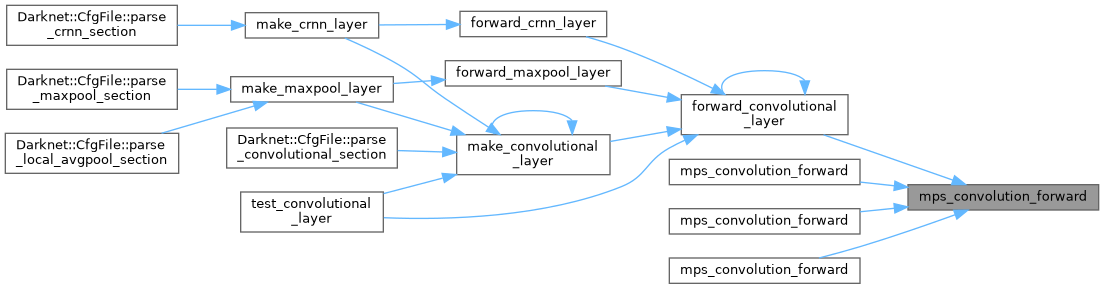

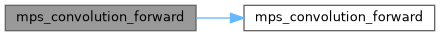

| bool | mps_convolution_forward (const Darknet::Layer &l, const Darknet::Layer *prev, const float *input, float *output, bool defer_readback, bool *activation_applied, const char **reason) |

| | Try to execute convolution forward using MPS. |

| static bool | mps_convolution_forward (const Darknet::Layer &l, const Darknet::Layer *prev, const float *input, float *output, const char **reason) |

| static bool | mps_convolution_forward (const Darknet::Layer &l, const float *input, float *output) |

| static bool | mps_convolution_forward (const Darknet::Layer &l, const float *input, float *output, const char **reason) |

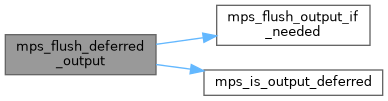

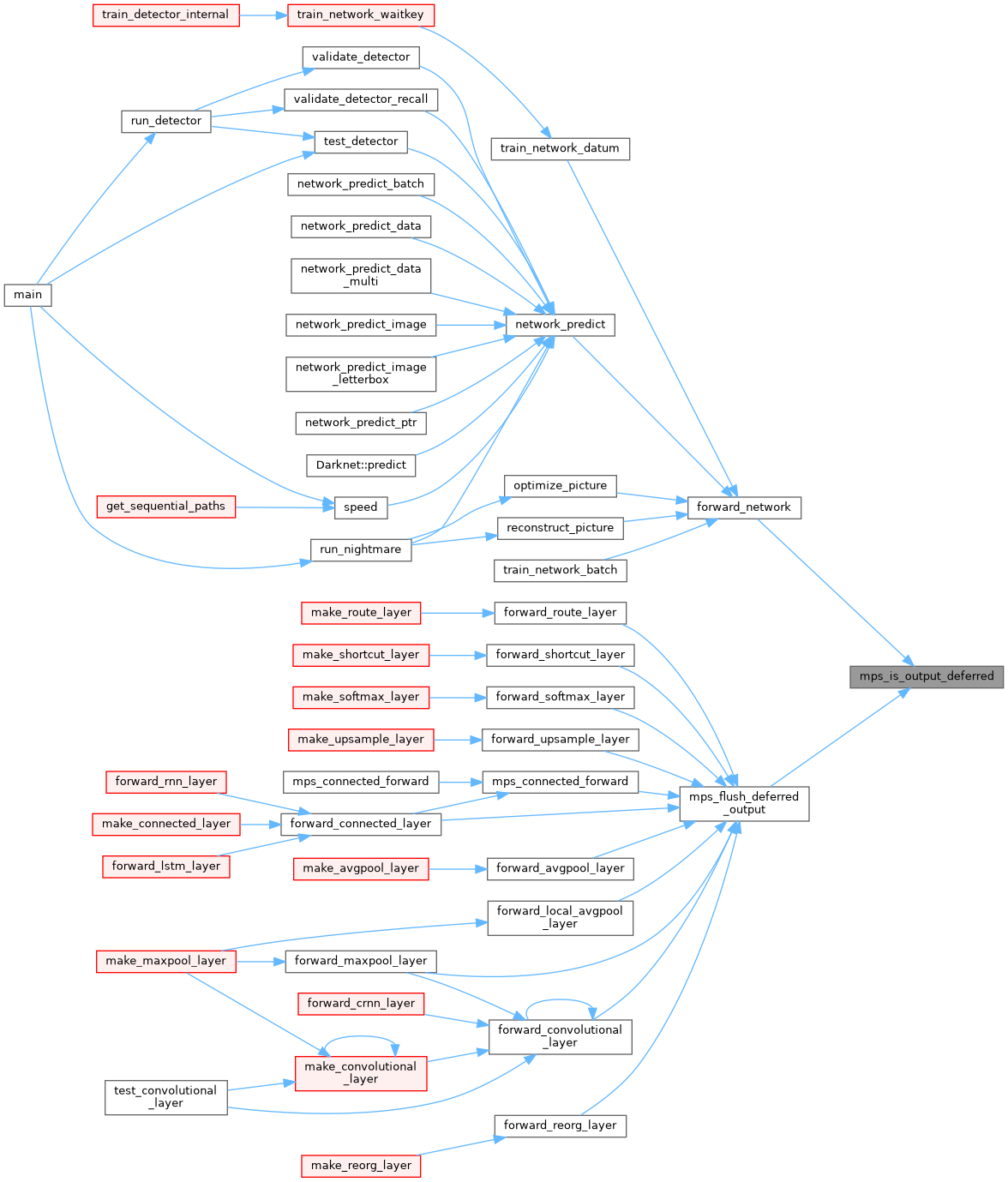

| void | mps_flush_deferred_output (const Darknet::Layer *layer) |

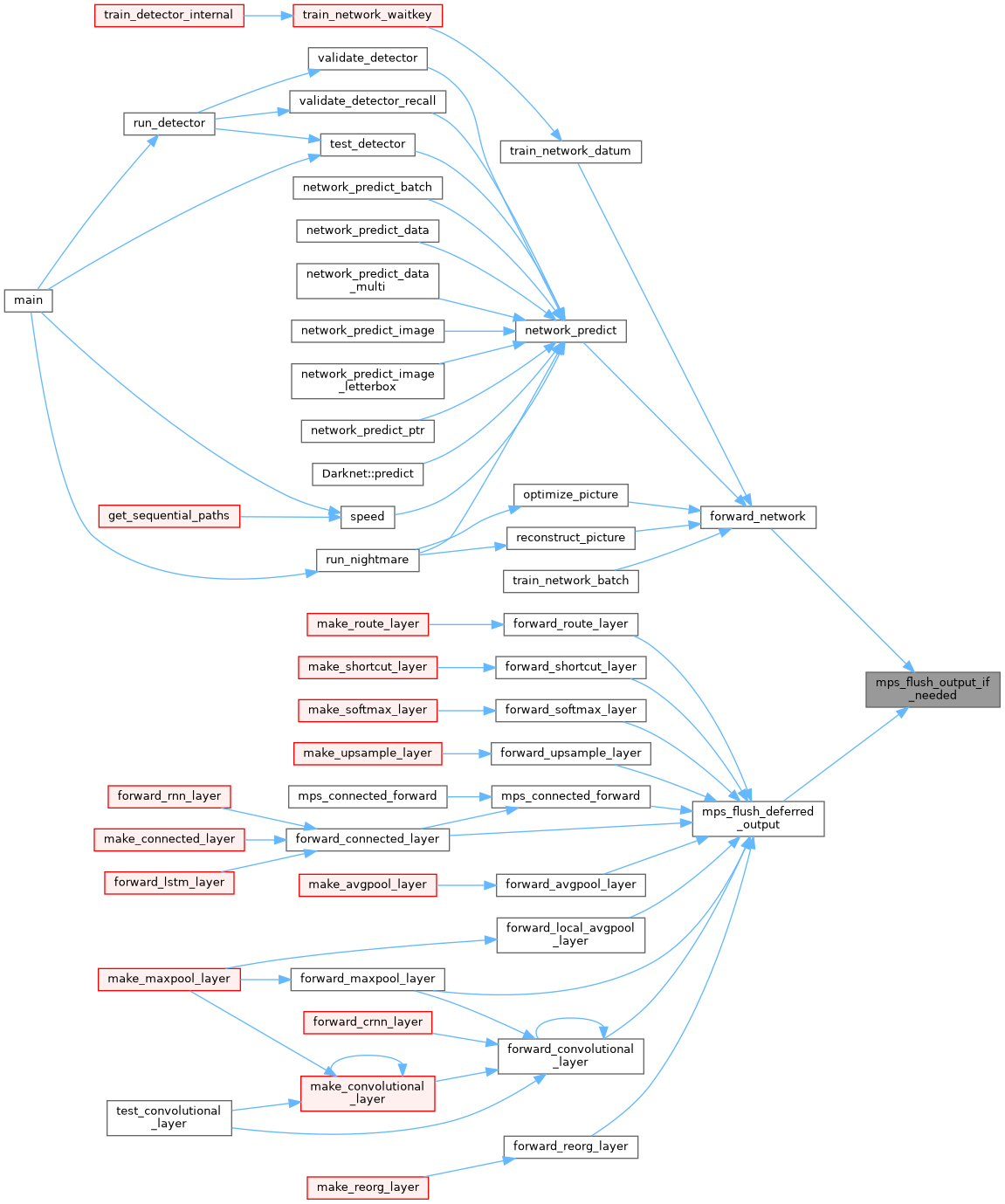

| void | mps_flush_output_if_needed (const Darknet::Layer *layer, float *output) |

| bool | mps_gemm (int TA, int TB, int M, int N, int K, float ALPHA, float *A, int lda, float *B, int ldb, float BETA, float *C, int ldc) |

| | Try to execute GEMM using MPS. |

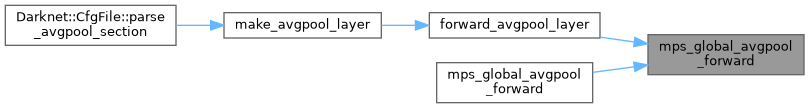

| bool | mps_global_avgpool_forward (const Darknet::Layer &l, const Darknet::Layer *prev, const float *input, float *output, bool defer_readback, const char **reason) |

| | Try to execute global avgpool forward using MPS. |

| static bool | mps_global_avgpool_forward (const Darknet::Layer &l, const Darknet::Layer *prev, const float *input, float *output, const char **reason) |

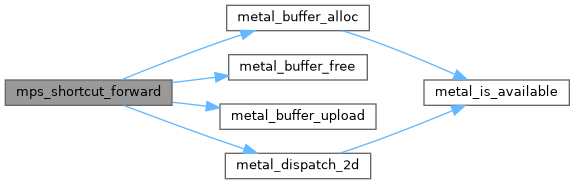

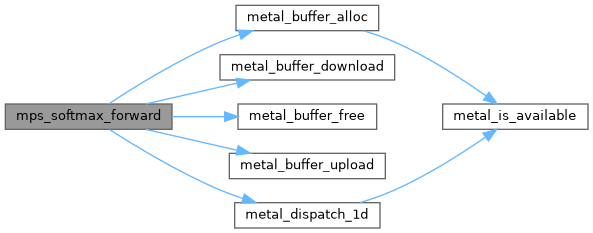

| bool | mps_is_available () |

| | Returns true if MPS is available and initialized. |

| bool | mps_is_output_deferred (const Darknet::Layer *layer) |

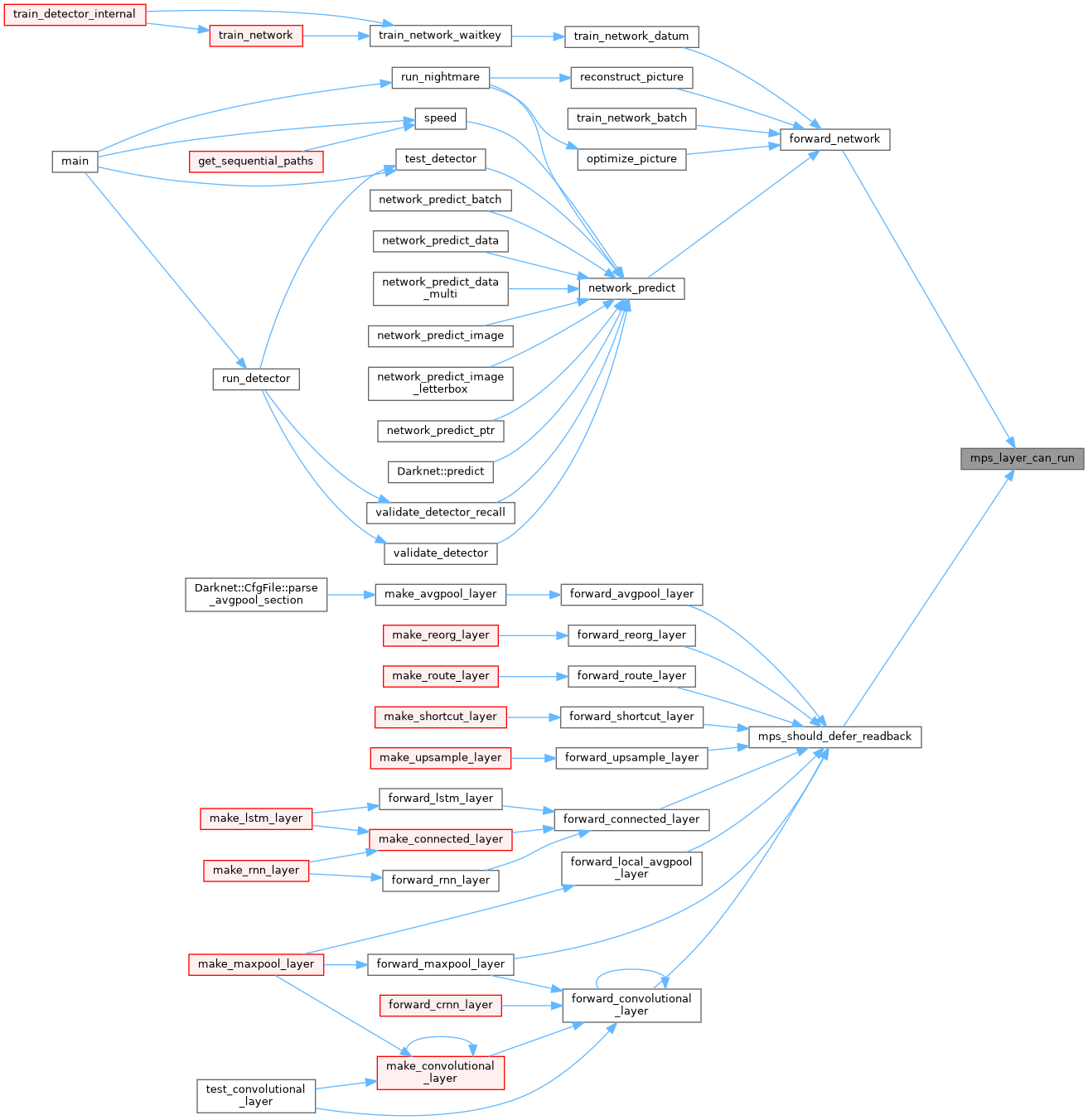

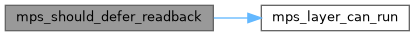

| bool | mps_layer_can_run (const Darknet::Layer &l, bool train) |

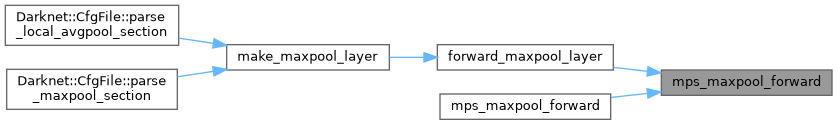

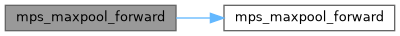

| bool | mps_maxpool_forward (const Darknet::Layer &l, const Darknet::Layer *prev, const float *input, float *output, bool defer_readback, const char **reason) |

| static bool | mps_maxpool_forward (const Darknet::Layer &l, const float *input, float *output) |

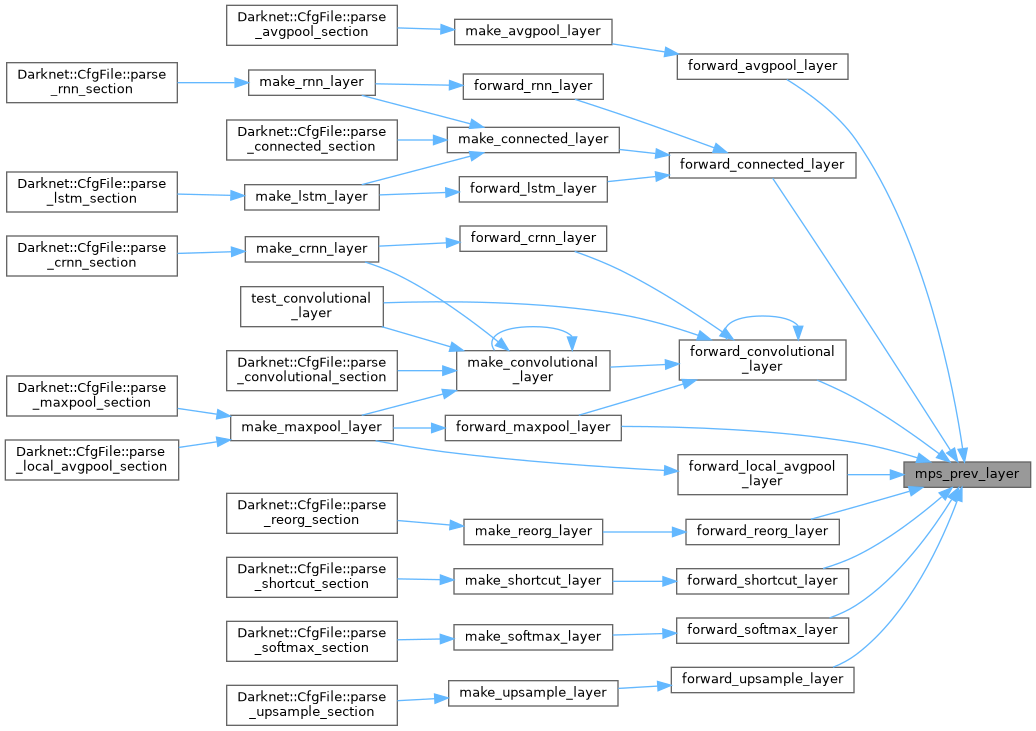

| const Darknet::Layer * | mps_prev_layer (const Darknet::NetworkState &state) |

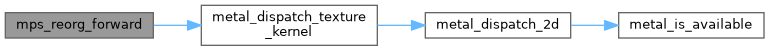

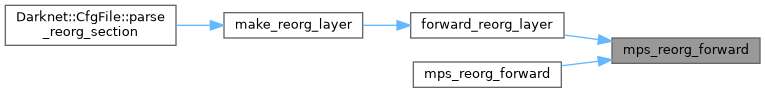

| bool | mps_reorg_forward (const Darknet::Layer &l, const Darknet::Layer *prev, const float *input, float *output, bool defer_readback, const char **reason) |

| | Try to execute reorg using a Metal kernel on GPU. |

| static bool | mps_reorg_forward (const Darknet::Layer &l, const Darknet::Layer *prev, const float *input, float *output, const char **reason) |

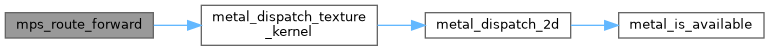

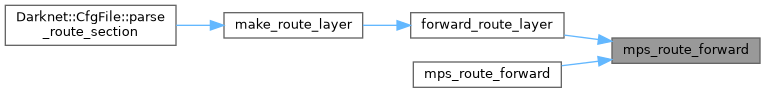

| bool | mps_route_forward (const Darknet::Layer &l, const Darknet::Network &net, float *output, bool defer_readback, const char **reason) |

| | Try to concatenate route inputs using MPS. |

| static bool | mps_route_forward (const Darknet::Layer &l, const Darknet::Network &net, float *output, const char **reason) |

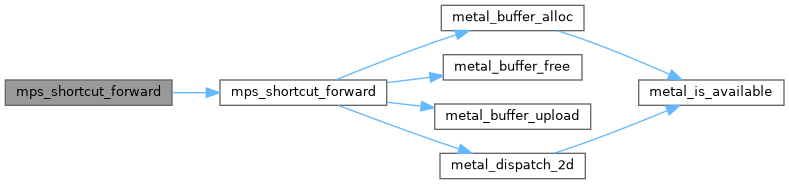

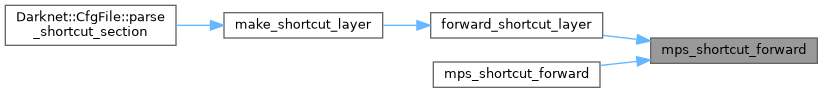

| static bool | mps_shortcut_forward (const Darknet::Layer &l, const Darknet::Layer *from, const float *input, float *output, const char **reason) |

| bool | mps_shortcut_forward (const Darknet::Layer &l, const Darknet::Layer *prev, const Darknet::Layer *from, const float *input, float *output, bool defer_readback, bool *activation_applied, const char **reason) |

| | Try to execute shortcut add using MPS. |

| bool | mps_should_defer_readback (const Darknet::NetworkState &state) |

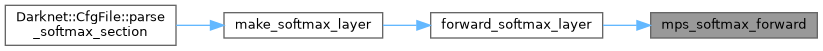

| bool | mps_softmax_forward (const Darknet::Layer &l, const Darknet::Layer *prev, const float *input, float *output, const char **reason) |

| | Try to execute softmax on GPU. |

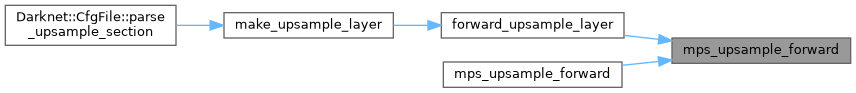

| bool | mps_upsample_forward (const Darknet::Layer &l, const Darknet::Layer *prev, const float *input, float *output, bool defer_readback, const char **reason) |

| | Try to upsample using a Metal kernel on GPU. |

| static bool | mps_upsample_forward (const Darknet::Layer &l, const Darknet::Layer *prev, const float *input, float *output, const char **reason) |

MPS/Metal inference entry points and helpers.
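
Most of the entry points above return `bool` and are described as "Try to execute ...", which suggests a try-then-fall-back calling convention. The following is a minimal sketch of that convention for a GEMM-shaped call, not the project's actual code. It assumes that a `false` return means "not handled, the caller should run its CPU path", that matrices are stored row-major, and that the arguments carry the usual BLAS-style meaning `C = ALPHA * op(A) * op(B) + BETA * C`; none of this is confirmed by the listing. `try_accelerated_gemm`, `gemm_reference` and `gemm_with_fallback` are hypothetical names introduced for illustration, and the stand-in only borrows the parameter list of `mps_gemm`.

```cpp
#include <cassert>

// Hypothetical stand-in with the same parameter list as mps_gemm.
// It always declines here so that the fallback path is exercised.
static bool try_accelerated_gemm(int, int, int, int, int, float,
                                 float *, int, float *, int,
                                 float, float *, int)
{
    return false; // assumption: false means "not handled, fall back"
}

// Plain CPU reference, assuming row-major storage and
// C = ALPHA * op(A) * op(B) + BETA * C, where op() transposes
// its argument when the matching TA/TB flag is non-zero.
static void gemm_reference(int TA, int TB, int M, int N, int K, float ALPHA,
                           const float *A, int lda, const float *B, int ldb,
                           float BETA, float *C, int ldc)
{
    assert(A && B && C);
    for (int i = 0; i < M; ++i) {
        for (int j = 0; j < N; ++j) {
            float sum = 0.0f;
            for (int k = 0; k < K; ++k) {
                const float a = TA ? A[k * lda + i] : A[i * lda + k];
                const float b = TB ? B[j * ldb + k] : B[k * ldb + j];
                sum += a * b;
            }
            C[i * ldc + j] = ALPHA * sum + BETA * C[i * ldc + j];
        }
    }
}

// Try the accelerated path first; if it declines, compute on the CPU.
// Returns true only when the accelerated path produced the result.
static bool gemm_with_fallback(int TA, int TB, int M, int N, int K, float ALPHA,
                               float *A, int lda, float *B, int ldb,
                               float BETA, float *C, int ldc)
{
    if (try_accelerated_gemm(TA, TB, M, N, K, ALPHA, A, lda, B, ldb, BETA, C, ldc)) {
        return true;
    }
    gemm_reference(TA, TB, M, N, K, ALPHA, A, lda, B, ldb, BETA, C, ldc);
    return false;
}
```

Under these assumptions the caller gets a correct `C` either way, and the return value only reports which path ran. The layer-level functions that take a `const char **reason` argument presumably follow the same shape, with the extra out-parameter explaining why a request was declined, but that reading is a guess from the signatures alone.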